Delivering live and on-demand video at scale requires far more than compute and storage. Modern streaming platforms must combine security, performance, high availability, and global reach—all while supporting hybrid environments where video originates from on-premises infrastructure but is distributed through the cloud.

At Automat-it, our team designed and built the AWS-based streaming foundation for freeTV, a hybrid cloud platform delivering live and VOD content to viewers worldwide. This article provides a technical deep dive into the architecture. It explains how we combined multi-account governance, centralised networking with Palo Alto firewalls, AWS Direct Connect, Amazon CloudFront, and AWS security services to achieve a secure and resilient solution.

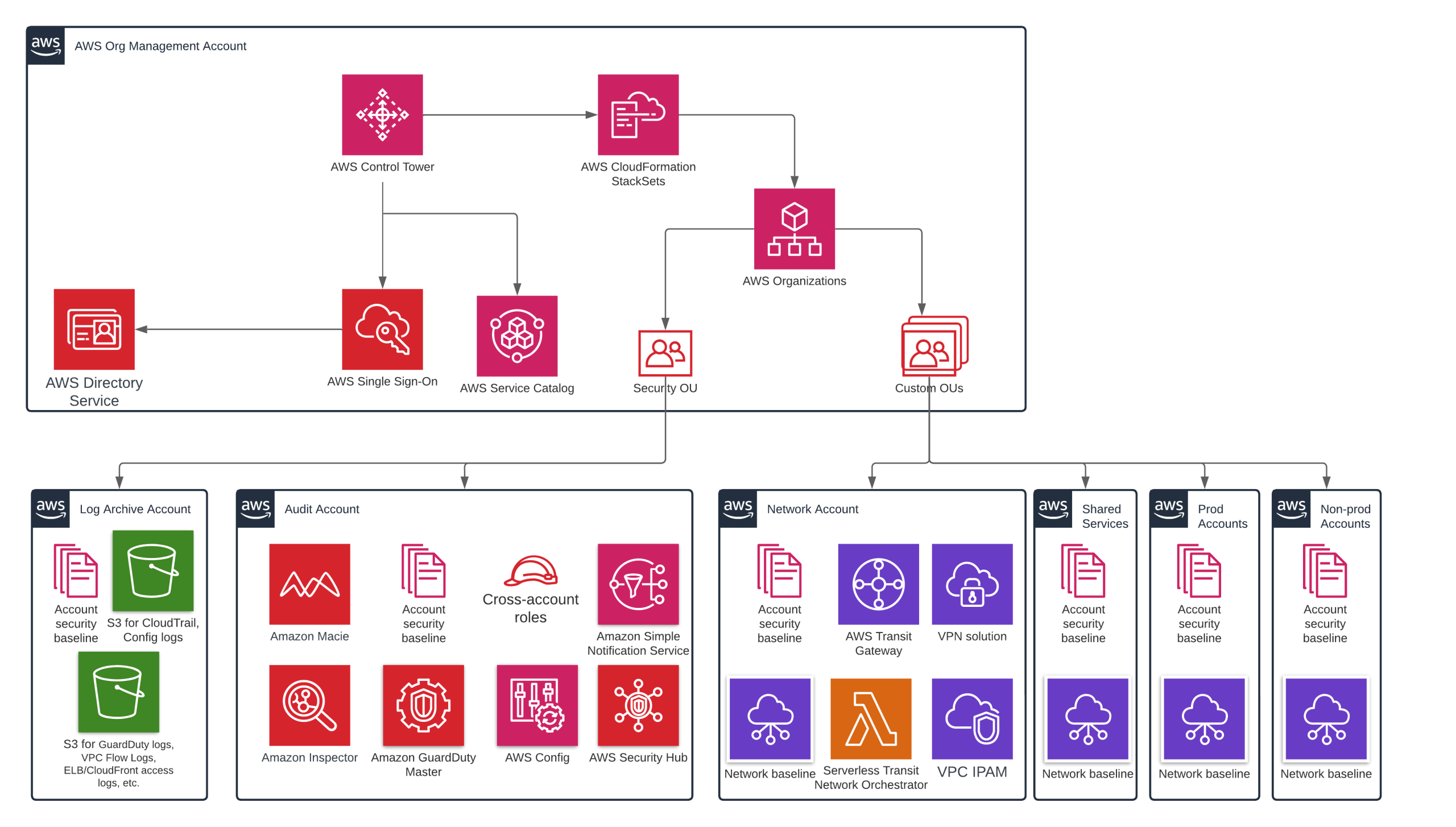

Multi-Account AWS Landing Zone: Foundation for Security & Governance

A strong streaming platform begins with a robust AWS organizational model.

freeTV operates on a multi-account AWS Landing Zone, which is implemented using AWS Control Tower. Each account has a clear, single responsibility, ensuring isolation, compliance, and operational clarity.

Core benefits of this model

- Blast radius reduction: A compromise in one workload does not affect others.

- Cost transparency: Each workload is assigned to its own account for accurate FinOps reporting.

- Policy enforcement: Governance is enforced using Service Control Policies (SCPs) and AWS Config rules.
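To make the policy-enforcement point concrete, here is a minimal Python sketch that builds a guardrail SCP document protecting the logging baseline. The action names are real IAM actions. The selection of actions, the `Sid`, and the helper names are illustrative assumptions, not freeTV's actual policies. In practice the resulting JSON would be attached to an OU through AWS Organizations.

```python
import json

# Real IAM action names; the selection itself is an illustrative assumption.
PROTECTED_ACTIONS = [
    "cloudtrail:StopLogging",
    "cloudtrail:DeleteTrail",
    "config:StopConfigurationRecorder",
    "config:DeleteConfigurationRecorder",
    "organizations:LeaveOrganization",
]


def build_guardrail_scp(actions: list[str]) -> dict:
    """Return an SCP document with one explicit Deny for the given actions.

    SCPs never grant permissions; an explicit Deny here overrides any
    Allow in the member account's IAM policies.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ProtectLoggingBaseline",  # hypothetical statement ID
                "Effect": "Deny",
                "Action": sorted(actions),
                "Resource": "*",
            }
        ],
    }


if __name__ == "__main__":
    # Print the JSON you would attach to an OU via AWS Organizations.
    print(json.dumps(build_guardrail_scp(PROTECTED_ACTIONS), indent=2))
```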

Key accounts in this architecture

- Logging account – stores logs from AWS CloudTrail, AWS Config, VPC Flow Logs, etc.

- Audit account – contains Amazon GuardDuty, AWS IAM Access Analyzer, and AWS Security Hub. This account is the delegated administrator for these AWS security services.

- Network account – central hub with all shared networking components (Transit Gateway, VPN, Firewalls, etc.)

- Workload accounts – each application runs in its own isolated environment.

- Shared services account – hosts AWS Directory Service, which serves as the identity source for SSO into AWS and for the VPN (Palo Alto GlobalProtect).

This layout establishes the foundation for zero-trust networking, compliance, and scalable growth as new workloads or regions are added.

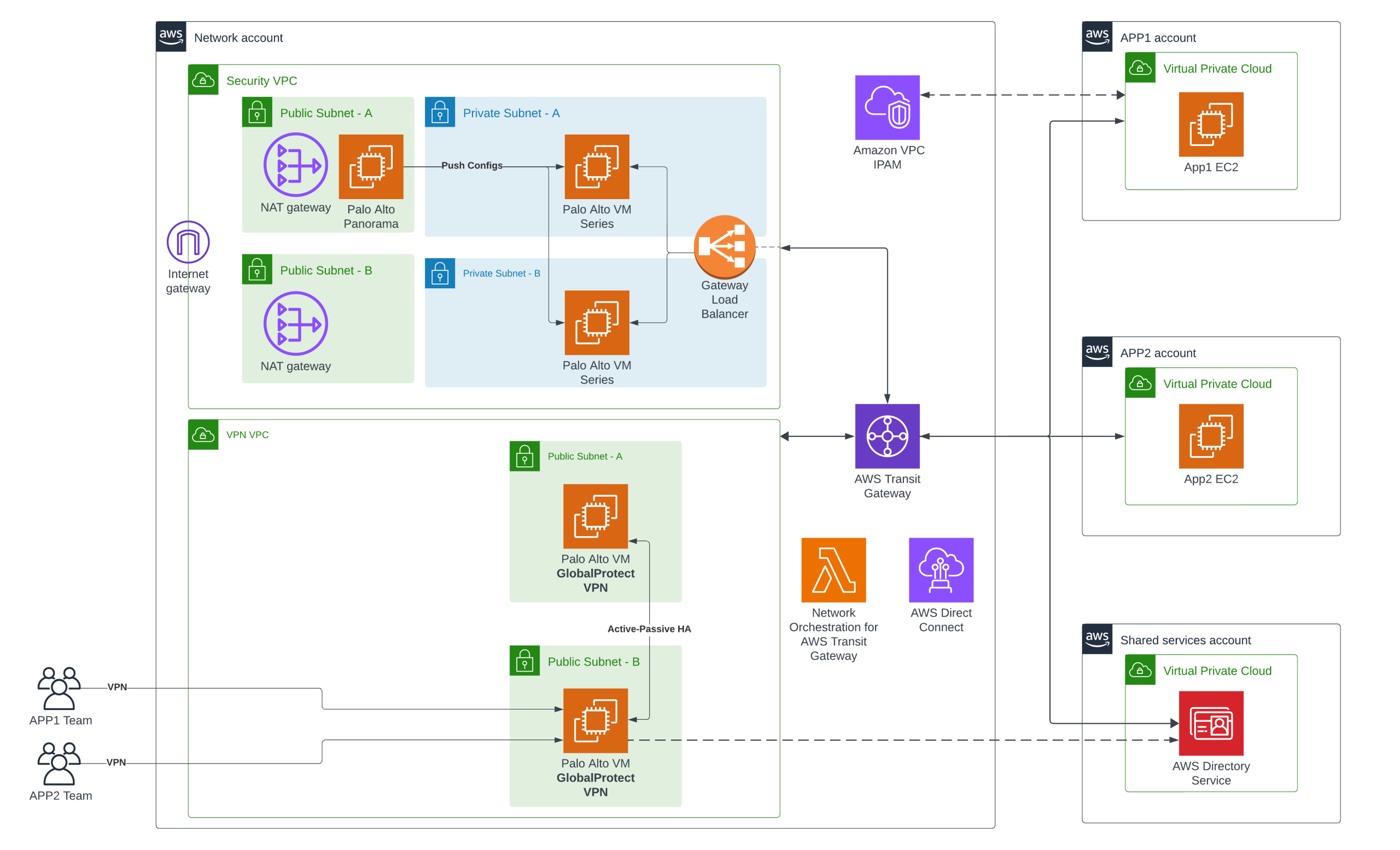

Centralised Networking: Firewall and VPN

The Network account contains:

- A shared Transit Gateway

- Network Orchestration for AWS Transit Gateway – automation that attaches Amazon VPCs to the central Transit Gateway and creates the appropriate routes

- Amazon VPC IP Address Manager (IPAM)

- Palo Alto firewall for centralised filtering for east-west (between VPCs) and north-south (to and from the internet) traffic.

- Palo Alto Panorama – centralised management for the firewalls (a single point of configuration)

- Palo Alto GlobalProtect – a client VPN solution that gives engineers connectivity to private networks.

- AWS Direct Connect – for stable, high-performance, private connectivity between the AWS cloud and on-premises.
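Amazon VPC IPAM does the real allocation work as a managed service. The toy Python sketch below only illustrates the invariant it enforces: every VPC receives a CIDR from a shared pool that never overlaps another VPC's range. Keeping all VPCs inside one 10.0.0.0/8 space is also what lets the firewall route tables use a single 10.0.0.0/8 summary route for return traffic. The `CidrPool` class, its first-fit strategy, and the 10.128.0.0/16 pool are hypothetical.

```python
import ipaddress


class CidrPool:
    """Toy stand-in for an IPAM pool: hands out non-overlapping IPv4 subnets."""

    def __init__(self, cidr: str):
        self.pool = ipaddress.IPv4Network(cidr)
        self.allocated: list[ipaddress.IPv4Network] = []

    def allocate(self, prefixlen: int) -> ipaddress.IPv4Network:
        # First-fit: take the lowest candidate that overlaps nothing allocated so far.
        for candidate in self.pool.subnets(new_prefix=prefixlen):
            if not any(candidate.overlaps(used) for used in self.allocated):
                self.allocated.append(candidate)
                return candidate
        raise ValueError(f"pool {self.pool} has no free /{prefixlen}")


if __name__ == "__main__":
    pool = CidrPool("10.128.0.0/16")  # hypothetical workload pool
    for name, size in [("vpc-a", 24), ("vpc-b", 22), ("vpc-c", 24)]:
        print(name, pool.allocate(size))
```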

As freeTV has several workloads with dedicated engineering teams, VPCs are isolated and cannot reach each other directly: all traffic between them passes through the firewall first. Each engineering team has private connectivity to its own network only. This is enforced through Active Directory groups combined with Palo Alto GlobalProtect, which pushes to each user only the routes permitted by their group’s security policy.
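The per-team access model can be illustrated with a short Python sketch. The group names and CIDRs are hypothetical. The code models only the routing outcome, meaning which prefixes a connected engineer's client receives. It is not GlobalProtect configuration, and the firewall security policy still applies to any traffic that does get routed.

```python
import ipaddress

# Hypothetical AD groups -> access routes their GlobalProtect config would push.
GROUP_ROUTES = {
    "eng-streaming": ["10.128.12.0/24"],
    "eng-billing": ["10.128.20.0/24"],
    "eng-platform": ["10.128.12.0/24", "10.128.20.0/24"],
}


def routes_for(groups: set[str]) -> list[ipaddress.IPv4Network]:
    """Union of the access routes for every group the user belongs to."""
    nets = {
        ipaddress.IPv4Network(cidr)
        for group in groups
        for cidr in GROUP_ROUTES.get(group, [])  # unknown groups get nothing
    }
    return sorted(nets)


def can_reach(groups: set[str], dest: str) -> bool:
    """True if any pushed route covers the destination address."""
    addr = ipaddress.IPv4Address(dest)
    return any(addr in net for net in routes_for(groups))


if __name__ == "__main__":
    print(routes_for({"eng-streaming"}))
    print(can_reach({"eng-streaming"}, "10.128.20.5"))  # another team's VPC
```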

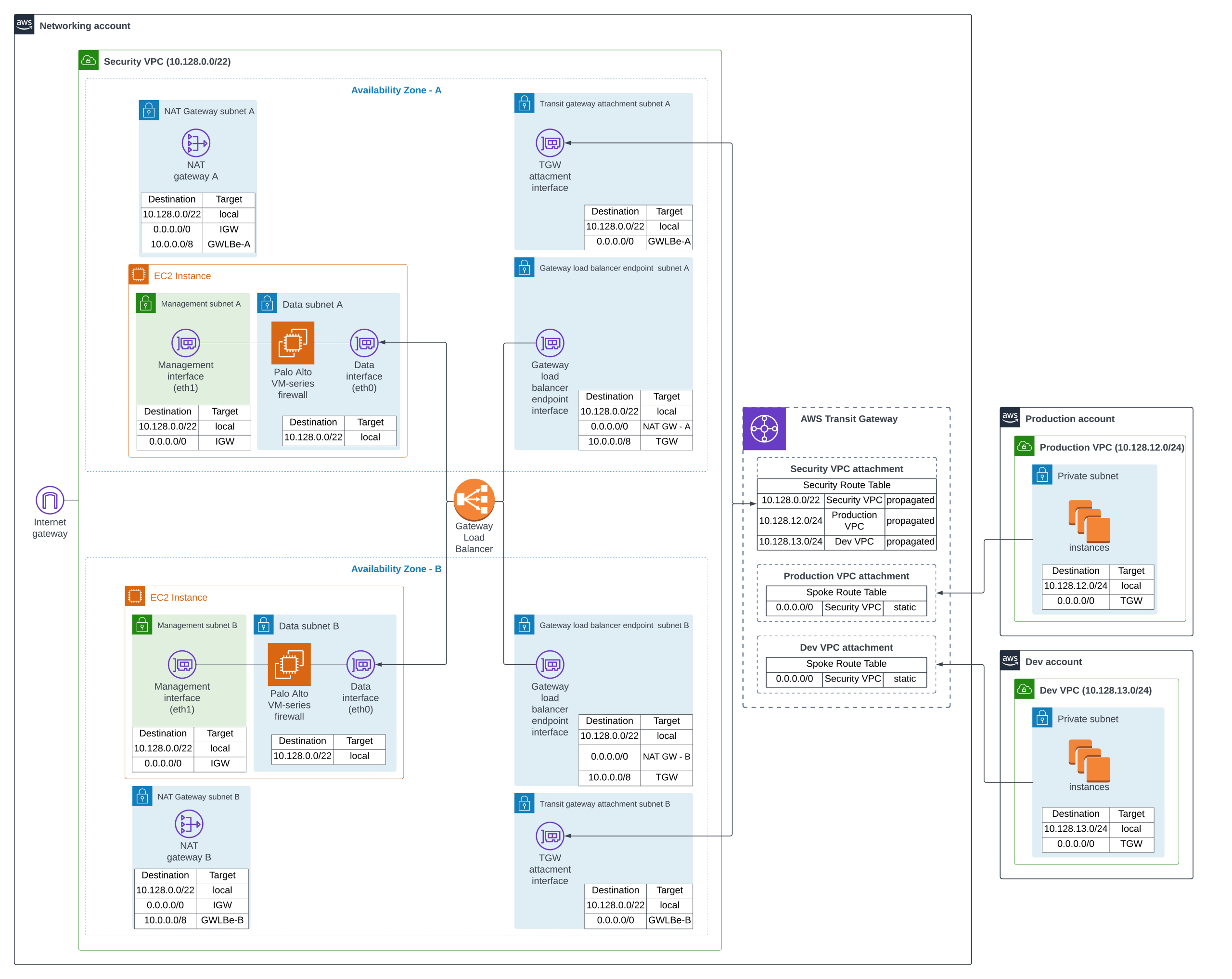

Traffic Flow Through Palo Alto Firewalls (North-South & East-West)

The Palo Alto VM-Series firewall protects the network and filters ingress and egress traffic. The security VPC, the firewalls, and the Transit Gateway reside in the central Network account of the multi-account AWS environment (Landing Zone). Spoke VPCs are attached to the central Transit Gateway and have a default route pointing to it, so their traffic passes through the TGW and the Gateway Load Balancer to one of the firewall VMs. If the firewall allows the traffic, it is forwarded to the internet through the NAT Gateway.
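Inspection only works if both directions of a connection reach the same firewall, because a stateful firewall typically drops return packets for sessions it has not seen. The Gateway Load Balancer provides this through flow stickiness, using a 5-tuple hash by default. On the way out the GWLBe sits before the NAT Gateway, and on the way back it sits after it. As a result, the firewall sees the same private address in both directions. The Python sketch below illustrates that property with a direction-independent key. GWLB's internal hashing is not public, so SHA-256 with a modulo is only a stand-in. The firewall names and addresses are hypothetical, and 203.0.113.0/24 is a documentation range.

```python
import hashlib


def flow_key(src_ip: str, dst_ip: str, proto: str, src_port: int, dst_port: int) -> bytes:
    """Direction-independent 5-tuple key: A->B and B->A produce the same bytes."""
    a, b = sorted([(src_ip, src_port), (dst_ip, dst_port)])
    return f"{proto}|{a[0]}:{a[1]}|{b[0]}:{b[1]}".encode()


def pick_firewall(healthy: list[str], src_ip: str, dst_ip: str,
                  proto: str, src_port: int, dst_port: int) -> str:
    """Map a flow onto one healthy firewall; both directions land on the same one."""
    if not healthy:
        raise RuntimeError("no healthy firewall targets")
    digest = hashlib.sha256(flow_key(src_ip, dst_ip, proto, src_port, dst_port)).digest()
    return healthy[int.from_bytes(digest[:8], "big") % len(healthy)]


if __name__ == "__main__":
    fws = ["fw-a", "fw-b"]  # hypothetical firewall VMs
    out = pick_firewall(fws, "10.128.12.10", "203.0.113.5", "tcp", 50000, 443)
    back = pick_firewall(fws, "203.0.113.5", "10.128.12.10", "tcp", 443, 50000)
    print(out, back, out == back)
```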

Here is a video with a high-level explanation of the architecture and packet flow.

The outbound traffic flow is as follows:

- A server in the Production VPC sends a packet to the internet. The first route table on its way is the subnet route table, whose default route 0.0.0.0/0 points to the Transit Gateway.

- The packet enters the Transit Gateway through the “Production” VPC attachment, which is associated with the “Spoke Route Table”. In that table, the default route 0.0.0.0/0 targets the “Security VPC” attachment.

- The next hop is one of the “TGW attachment” subnets in the Security VPC. These subnets are associated with a route table whose default route 0.0.0.0/0 points to the Gateway Load Balancer VPC endpoint (GWLBe).

- The Gateway Load Balancer receives the packet and forwards it to the data interface of a healthy firewall VM. The Palo Alto VM performs Layer 7 inspection and, if the traffic is allowed, sends it back to the Gateway Load Balancer. (The management interface never carries data traffic; it is used only for SSH or HTTPS access to the web UI for configuration.)

- The Gateway Load Balancer returns the inspected traffic to the originating endpoint in the “GWLBe subnet”. To reach the internet from there, the route table of the GWLBe subnet sends the default route 0.0.0.0/0 to the NAT Gateway.

- The NAT Gateway is deployed in the “NATGW subnet”, whose route table sends 0.0.0.0/0 to the Internet Gateway.

- Return traffic from the internet arrives at the NAT Gateway, which translates the destination back to the original private address in the Production VPC (10.128.12.0/24). The route table of the NATGW subnet sends 10.0.0.0/8 to the Gateway Load Balancer endpoint.

- Traffic reaches the Gateway Load Balancer and is automatically forwarded to the Palo Alto VM-Series firewall (data interface).

- The firewall inspects the traffic and sends it back to the Load Balancer (to the original VPC endpoint).

- Traffic returns to the “GWLBe subnet”, where the route table entry 10.0.0.0/8 sends it to the Transit Gateway.

- Traffic enters the Transit Gateway through the “Security-VPC” attachment. Its associated route table already contains propagated routes for all attached VPCs, so the route 10.128.12.0/24 forwards the packet to the “Production-VPC” attachment.
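The packet walk above is a chain of longest-prefix-match lookups, which the Python sketch below replays. The table names and the (target, next table) modelling are our own simplifications. The sketch leaves out VPC local routes other than the Production subnet, attachment associations, and the GENEVE encapsulation between the GWLB and the firewalls. The return trace starts after the NAT Gateway has already translated the destination back to the private address.

```python
import ipaddress

# Hypothetical route tables mirroring the walk above.
# Each entry maps a CIDR to (target, next table to consult); None = packet leaves.
ROUTES = {
    "prod-subnet": {
        "10.128.12.0/24": ("local", None),
        "0.0.0.0/0": ("tgw:production-attachment", "tgw-spoke-rt"),
    },
    "tgw-spoke-rt": {
        "0.0.0.0/0": ("tgw:security-attachment", "tgw-attach-subnet"),
    },
    "tgw-attach-subnet": {
        # GWLBe -> GWLB -> firewall -> GWLB -> back out of the same GWLBe.
        "0.0.0.0/0": ("gwlbe", "gwlbe-subnet"),
    },
    "gwlbe-subnet": {
        "0.0.0.0/0": ("natgw", "natgw-subnet"),
        "10.0.0.0/8": ("tgw:security-attachment", "tgw-security-rt"),
    },
    "natgw-subnet": {
        "0.0.0.0/0": ("igw", None),
        "10.0.0.0/8": ("gwlbe", "gwlbe-subnet"),
    },
    "tgw-security-rt": {
        # Propagated from the Production VPC attachment.
        "10.128.12.0/24": ("tgw:production-attachment", None),
    },
}


def longest_prefix_match(table: dict, dst: str) -> tuple:
    """Return the entry whose CIDR contains dst with the longest prefix."""
    addr = ipaddress.IPv4Address(dst)
    candidates = [ipaddress.IPv4Network(c) for c in table if addr in ipaddress.IPv4Network(c)]
    if not candidates:
        raise LookupError(f"no route to {dst}")
    best = max(candidates, key=lambda net: net.prefixlen)
    return table[str(best)]


def trace(start_table: str, dst: str, max_hops: int = 10) -> list[str]:
    """Follow the tables hop by hop and record each routing decision."""
    path, table = [], start_table
    for _ in range(max_hops):
        target, next_table = longest_prefix_match(ROUTES[table], dst)
        path.append(f"{table}: {dst} -> {target}")
        if next_table is None:
            return path
        table = next_table
    raise RuntimeError("hop limit reached; possible routing loop")


if __name__ == "__main__":
    # Outbound: server -> internet (198.51.100.7 is a documentation address).
    print("\n".join(trace("prod-subnet", "198.51.100.7")))
    # Return: starts after the NAT Gateway has restored the private destination.
    print("\n".join(trace("natgw-subnet", "10.128.12.10")))
```

The return trace shows why the NATGW and GWLBe route tables need the 10.0.0.0/8 entry. Without it, the longest match for a private destination would be 0.0.0.0/0, and the reply would be sent toward the Internet Gateway or NAT Gateway instead of back through the firewall and the Transit Gateway.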

Benefits of this model

- Centralised inspection for all workloads

- Zero-trust by default

- No shadow paths or bypasses

- Consistent compliance and auditability

- Policies synchronised via Panorama

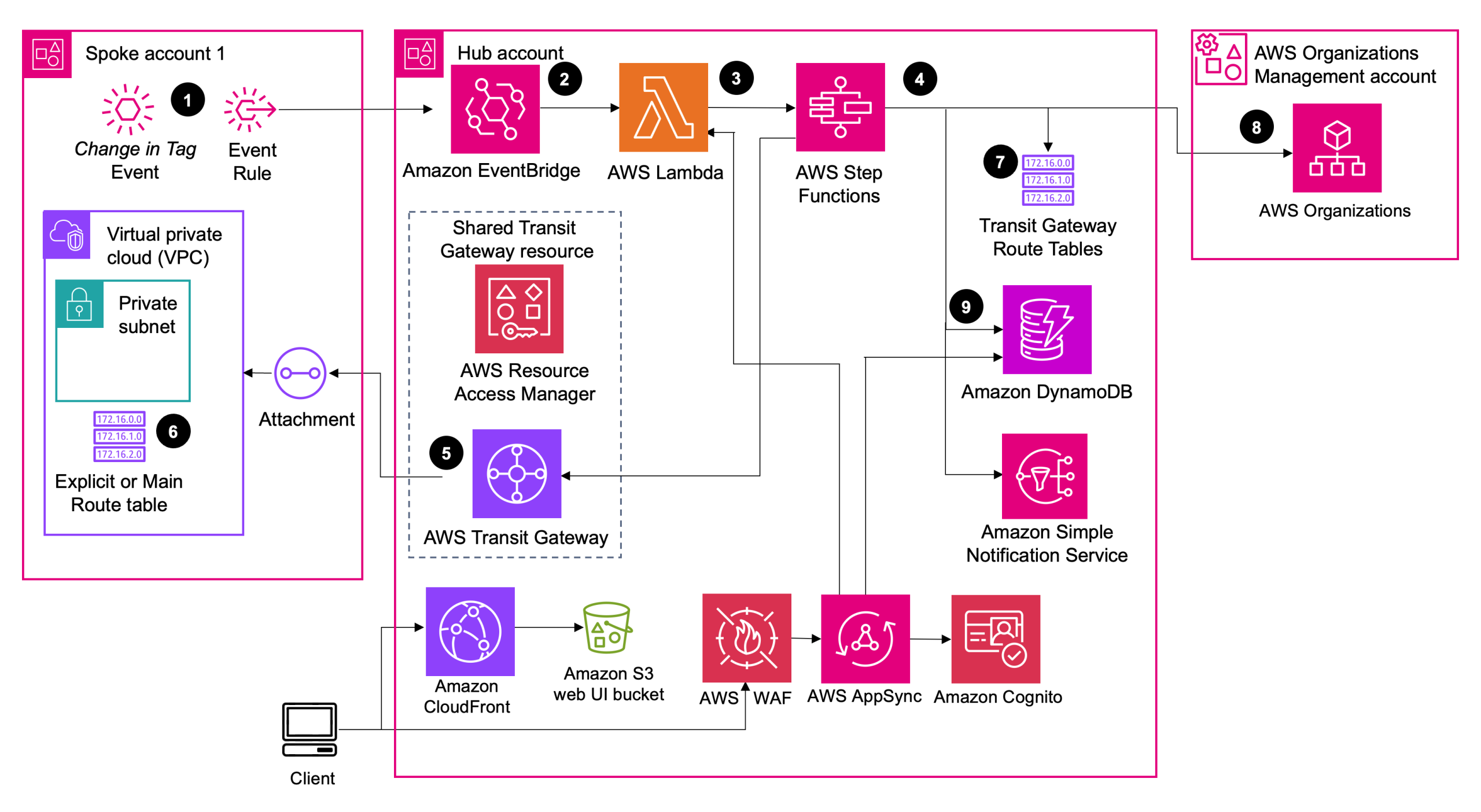

Network Orchestration for AWS Transit Gateway

The Network Orchestration for AWS Transit Gateway solution automates the process of setting up and managing transit networks in distributed AWS environments. This solution allows customers to visualise and monitor their global network from a single dashboard rather than toggling between Regions from the AWS Console. It creates a web interface to help control, audit, and approve transit network changes.

The solution follows a hub-spoke deployment model and uses the following workflow:

- An Amazon EventBridge rule monitors specific VPC and subnet tag changes.

- An EventBridge rule in the spoke account sends the tags to the EventBridge bus in the hub account.

- The rules associated with the EventBridge bus invoke an AWS Lambda function to start the solution workflow.

- AWS Step Functions (solution state machine) processes network requests from the spoke accounts.

- The state machine workflow attaches a VPC to the transit gateway.

- The state machine workflow updates the VPC route table associated with the tagged subnet.

- The state machine workflow updates the transit gateway route table with association and propagation changes.

- (Optional) The state machine workflow updates the attachment name with the VPC name and the Organizational Unit (OU) name for the spoke account (retrieved from the Org Management account).

- The solution updates Amazon DynamoDB with the information extracted from the event and resources created, updated, or deleted in the workflow.
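The first step of this workflow depends on an EventBridge event pattern. The Python sketch below shows how such a pattern selects tag-change events. The event shape follows AWS tag-change events ("source" `aws.tag`, "detail-type" `Tag Change on Resource`), abbreviated here. The tag keys are assumptions for illustration, not necessarily the keys the solution uses. The matcher implements only the exact-value subset of EventBridge pattern semantics and omits prefix, numeric, and exists filters.

```python
# Abbreviated "Tag Change on Resource" event; the tag keys are hypothetical.
SAMPLE_EVENT = {
    "source": "aws.tag",
    "detail-type": "Tag Change on Resource",
    "detail": {
        "changed-tag-keys": ["Attach-to-tgw"],
        "service": "ec2",
        "resource-type": "subnet",
        "tags": {"Attach-to-tgw": "hub-tgw"},
    },
}

# Pattern a spoke-account rule might use (tag keys are assumptions).
PATTERN = {
    "source": ["aws.tag"],
    "detail-type": ["Tag Change on Resource"],
    "detail": {
        "service": ["ec2"],
        "resource-type": ["vpc", "subnet"],
        "changed-tag-keys": ["Attach-to-tgw", "Associate-with", "Propagate-to"],
    },
}


def matches(pattern: dict, event: dict) -> bool:
    """Exact-value subset of EventBridge pattern matching.

    A list in the pattern enumerates allowed values; a list in the event
    matches if any of its elements is allowed; nested dicts recurse.
    """
    for key, expected in pattern.items():
        if key not in event:
            return False
        value = event[key]
        if isinstance(expected, dict):
            if not isinstance(value, dict) or not matches(expected, value):
                return False
        else:
            candidates = value if isinstance(value, list) else [value]
            if not any(item in expected for item in candidates):
                return False
    return True


if __name__ == "__main__":
    print(matches(PATTERN, SAMPLE_EVENT))
```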

freeTV Management applications

The management system includes:

- Customer onboarding

- Billing

- CRM

- Internal APIs

- End-user applications

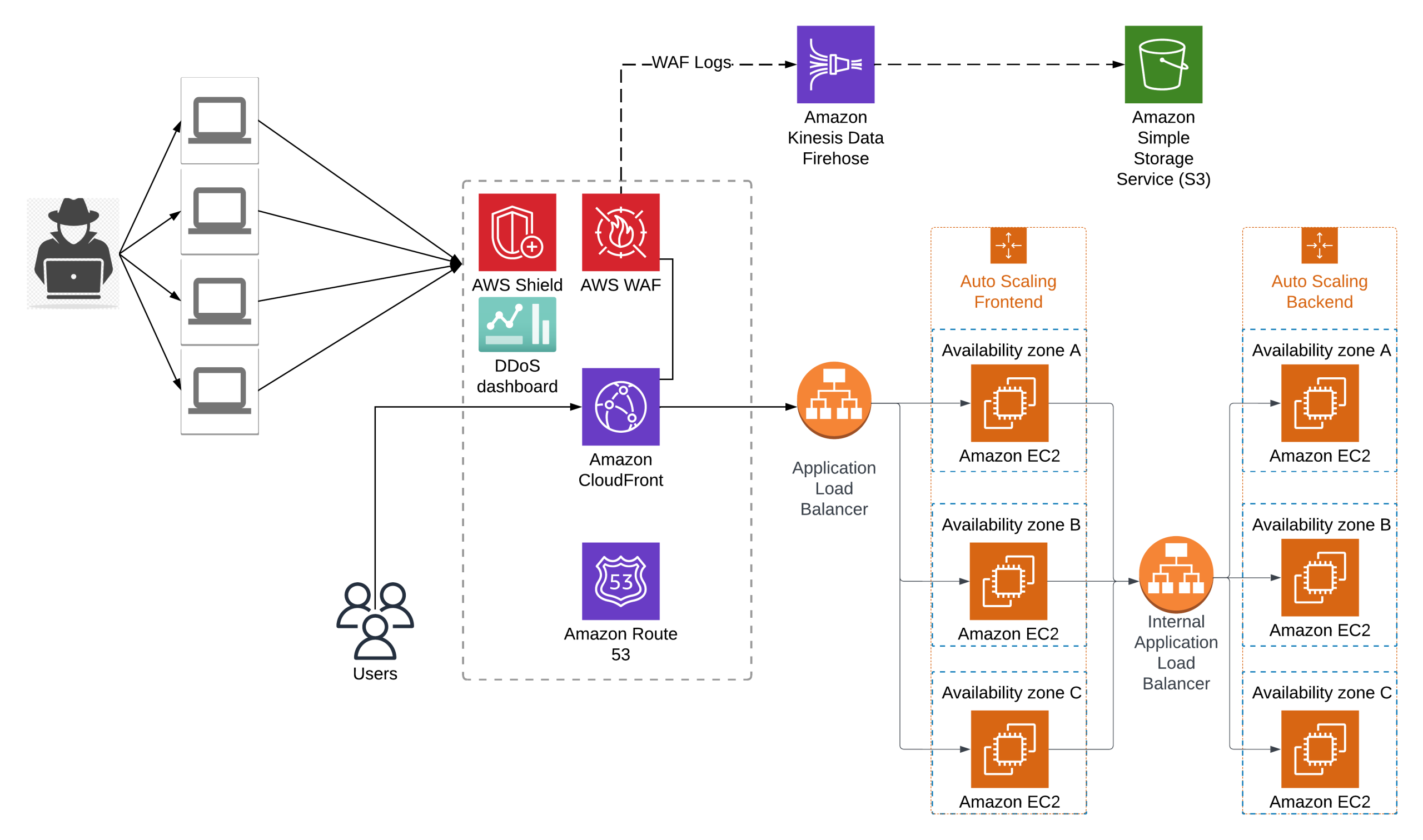

Architecture Components

- Backend: Runs on Amazon EC2 in private subnets behind an internal Application Load Balancer.

- Frontend: Runs on Amazon EC2 (Amazon EC2 Auto Scaling group) behind a public Application Load Balancer.

- Content delivery: Amazon CloudFront distribution with:

  - Application Load Balancer as origin

  - AWS WAF (managed rules + custom rules)

  - AWS Shield Standard for DDoS protection

Streaming applications

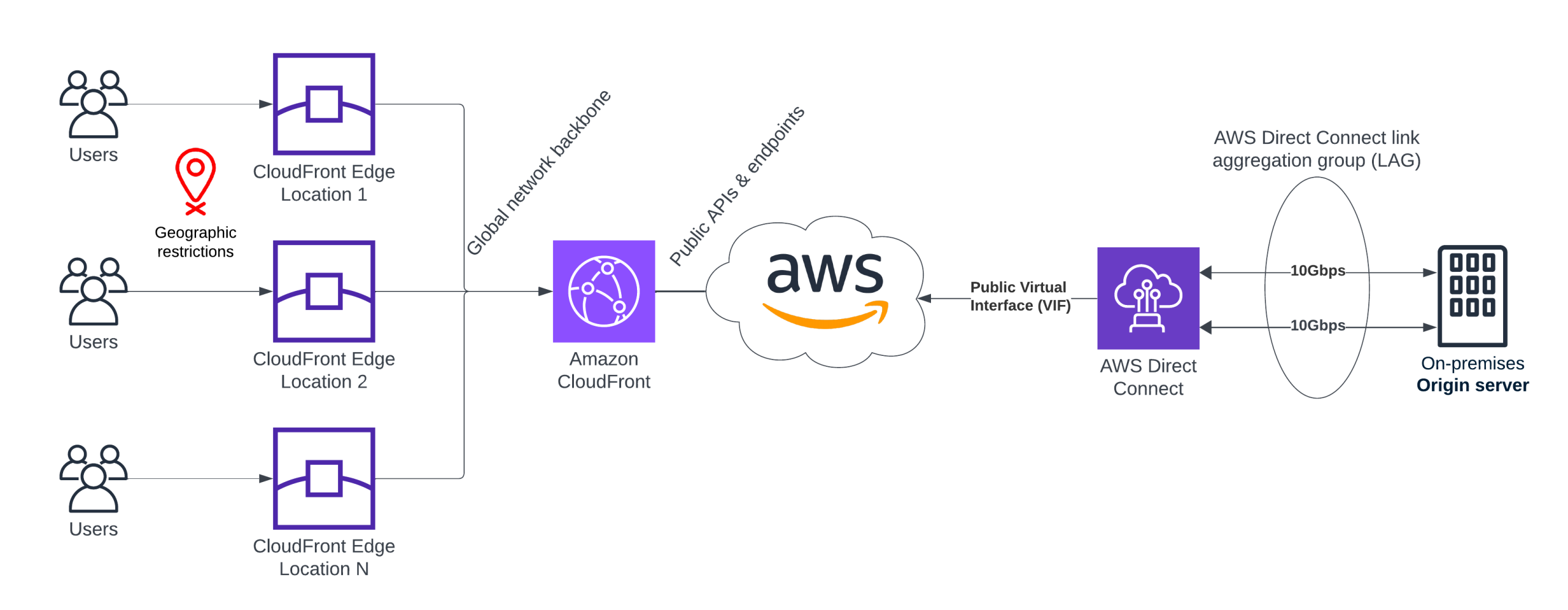

The streaming content itself originates from on-premises origin servers. This is where hybrid networking becomes crucial.

freeTV uses:

- Two 10 Gbps Direct Connect links

- Combined in a LAG (Link Aggregation Group)

- Active-active, providing 20 Gbps of aggregate throughput and high availability (traffic continues on one link if the other fails)
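The LAG figures translate into a simple capacity model, sketched in Python below. The `minimum_links` field mirrors the LAG's minimum-links setting: the LAG stays operational only while at least that many member links are up. freeTV's actual value is not stated, so the numbers here are assumptions. Because LACP hashes each flow onto a single member link, no individual flow can exceed 10 Gbps, even though the aggregate is 20 Gbps.

```python
from dataclasses import dataclass


@dataclass
class DirectConnectLag:
    """Toy capacity model of a Direct Connect LAG (values are assumptions)."""

    link_gbps: float
    links: int
    minimum_links: int = 1  # LAG is operational only while this many links are up

    def capacity_gbps(self, failed: int = 0) -> float:
        """Aggregate capacity with `failed` member links down."""
        up = self.links - failed
        if up < self.minimum_links or up <= 0:
            return 0.0
        return up * self.link_gbps

    def max_single_flow_gbps(self) -> float:
        # LACP hashes each flow onto one member link, so a flow is capped at one link.
        return self.link_gbps


if __name__ == "__main__":
    lag = DirectConnectLag(link_gbps=10, links=2)
    for failed in range(3):
        print(f"{failed} link(s) down -> {lag.capacity_gbps(failed)} Gbps")
```

With one link down, the LAG still carries 10 Gbps, so sustained origin traffic survives a single failure without congestion only while it stays below that level.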

Public Virtual Interface (Public VIF)

Since Amazon CloudFront is a public AWS service, traffic uses:

Direct Connect Public VIF → AWS Global Backbone → CloudFront

We do not expose the on-prem origin servers to the public internet. Traffic is delivered over dedicated physical fiber rather than across the internet.

Why Public VIF?

Because CloudFront origins can be:

- Amazon S3 (public endpoints)

- Application Load Balancer/Amazon EC2

- Or in this case, on-prem origin servers

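Amazon CloudFront reaches an on-prem custom origin at its public IP addresses. With a Public VIF, these origin fetches travel over Direct Connect rather than the internet, which gives the best performance. CloudFront then distributes the content from hundreds of edge locations worldwide.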

Key outcomes:

- Low-latency delivery via edge caching. Origins are contacted only on cache misses.

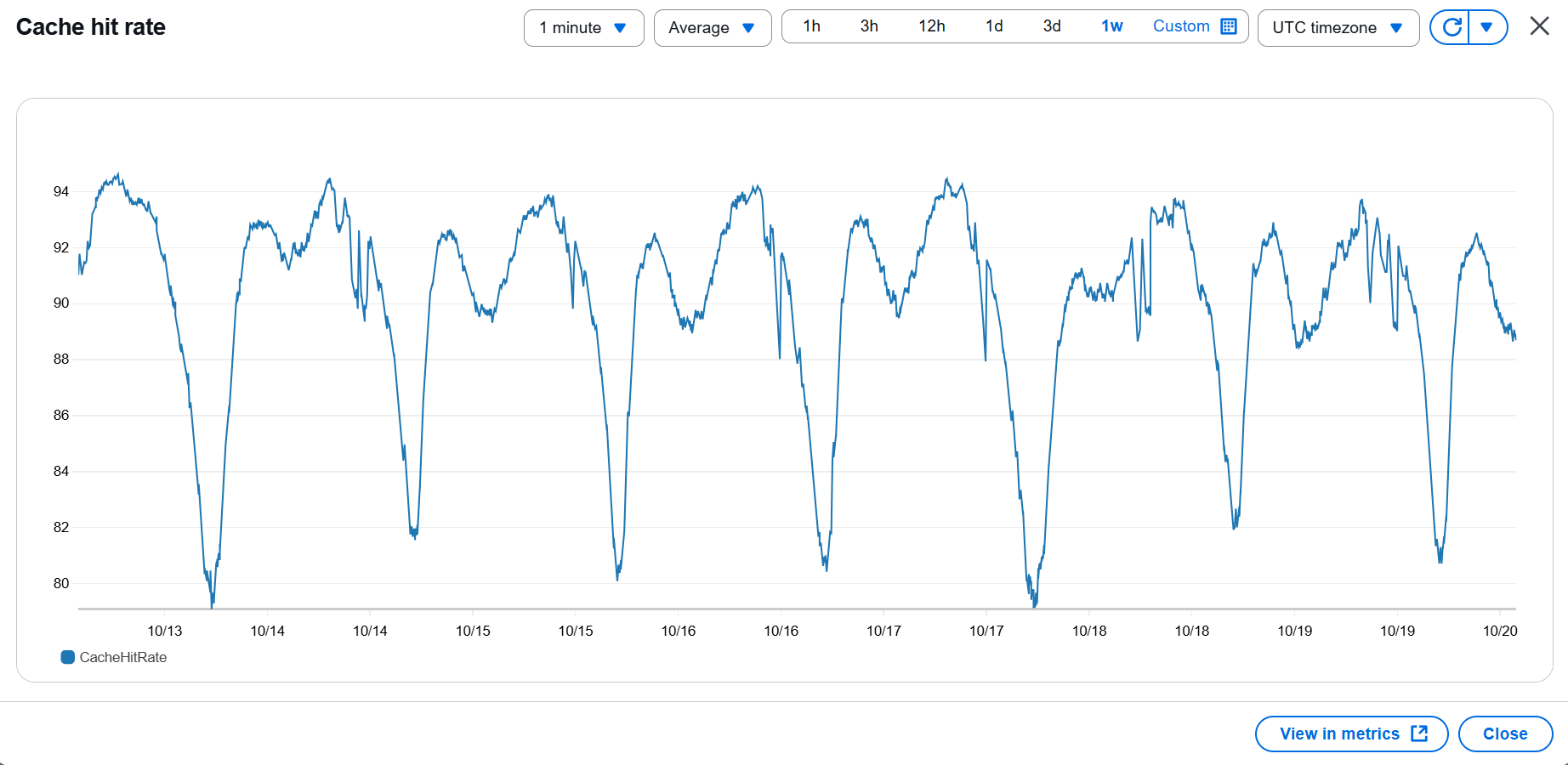

- 90–95% cache hit ratio (CHR). Our monitoring shows a consistently high CHR, with brief dips to about 80% when new content is uploaded and edge locations warm their caches.

- Geo-restrictions. Amazon CloudFront natively blocks requests from unwanted countries, adding another security layer.

- Petabyte-scale traffic. Monthly traffic volume reaches multiple petabytes, carried by the AWS global network backbone.
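The CHR dips matter more than the percentages suggest, because origin load scales with the miss ratio (1 − CHR), not with CHR itself. A dip from 95% to 80% quadruples the requests reaching the on-prem origin over Direct Connect. A dip from 90% to 80% doubles them. The short Python sketch below does this arithmetic. It assumes CHR is measured over requests and that edge traffic stays constant during the dip.

```python
def cache_hit_ratio(hits: int, misses: int) -> float:
    """CHR = hits / (hits + misses), measured over requests at the edge."""
    total = hits + misses
    if total == 0:
        raise ValueError("no requests observed")
    return hits / total


def origin_share(chr_value: float) -> float:
    """Fraction of edge requests that miss the cache and go to the origin."""
    return 1.0 - chr_value


def origin_load_multiplier(chr_before: float, chr_after: float) -> float:
    """How many times more origin fetches a CHR drop causes at equal edge traffic."""
    return origin_share(chr_after) / origin_share(chr_before)


if __name__ == "__main__":
    for steady in (0.90, 0.95):
        x = origin_load_multiplier(steady, 0.80)
        print(f"CHR {steady:.0%} -> 80%: origin load x{x:.1f}")
```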

Conclusions

This project demonstrates how security, automation, governance, and performance can be combined to build a world-class streaming platform on AWS.

By integrating hybrid connectivity, centralised firewalls, a global CDN, and multi-account infrastructure, freeTV now delivers content reliably to users worldwide at scale.